AI spend needs ownership, not just invoices

Why LLM token costs need the same ownership model as cloud infrastructure, and how engineering teams can connect spend to users, workflows, and decisions.

Cloud cost management improved when teams stopped treating the bill as a finance artifact and started assigning resources to owners. AI spend is reaching the same point.

The invoice is not enough

A provider invoice can show that AI usage went up, but it rarely explains what drove the increase. Engineering leaders need to know which product feature, internal tool, workflow, model, prompt pattern, or team created the cost.

That gap matters because LLM usage is created by engineering decisions. Model choice, context size, output length, retries, caching, agent loops, and evaluation runs all change the bill. Without ownership, the only visible outcome is a larger number at the end of the month.
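To make the link between engineering decisions and the bill concrete, here is a minimal sketch of a per-request cost estimate. The model names and per-million-token prices are hypothetical placeholders, not real provider rates, and the function assumes every retry re-sends the full prompt.

```python
# Hypothetical prices in USD per one million tokens.
# Real prices differ by provider and model and change over time.
PRICES = {
    "small-model": {"input": 0.50, "output": 1.50},
    "large-model": {"input": 5.00, "output": 15.00},
}


def estimate_cost(model, input_tokens, output_tokens, attempts=1):
    """Estimate the USD cost of one logical request.

    `attempts` counts the first call plus any retries; each attempt is
    assumed to be billed in full.
    """
    price = PRICES[model]
    per_attempt = (
        input_tokens * price["input"] + output_tokens * price["output"]
    ) / 1_000_000
    return per_attempt * attempts


# Same feature, two sets of engineering choices.
lean = estimate_cost("small-model", input_tokens=2_000, output_tokens=500)
heavy = estimate_cost("large-model", input_tokens=20_000, output_tokens=500, attempts=3)
```

Under these assumed prices, the second call costs far more than the first even though the user sees the same feature. The difference comes entirely from model choice, context size, and retries.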

Cloud teams already learned this lesson

Infrastructure teams used to receive blended cloud bills with limited accountability. The practical fix was to give spend a structure: tags, separate accounts and projects, environments, and named resource owners. Once spend had an owner, teams could review waste, forecast growth, and prioritize optimization work.

AI usage needs a similar ownership model. A token is not just a token. It belongs to a user, customer, team, service, feature, experiment, or background job. Cost management becomes useful when that relationship is visible.

- Cloud resources need tags and owners.

- LLM requests need actors and workflows.

- Budgets should map to teams and product surfaces.

- Optimization should be reviewed by the people who can change the code.
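Once requests carry an owner, a cost view is a simple roll-up. The sketch below assumes each usage record is a dictionary with a `cost_usd` field and optional ownership fields such as `team` or `workflow`. These field names are illustrative, not a standard schema.

```python
from collections import defaultdict


def cost_by_owner(records, key="team"):
    """Sum cost per owner, highest spend first.

    Records that lack the ownership field are grouped under
    "unattributed" so the gap stays visible instead of disappearing.
    """
    totals = defaultdict(float)
    for record in records:
        owner = record.get(key) or "unattributed"
        totals[owner] += record["cost_usd"]
    return dict(sorted(totals.items(), key=lambda item: item[1], reverse=True))


usage = [
    {"team": "support", "workflow": "ticket-summary", "cost_usd": 0.5},
    {"team": "search", "workflow": "query-rewrite", "cost_usd": 0.25},
    {"team": "support", "workflow": "reply-draft", "cost_usd": 1.0},
    {"workflow": "eval-run", "cost_usd": 0.75},  # no team recorded
]

by_team = cost_by_owner(usage, key="team")
by_workflow = cost_by_owner(usage, key="workflow")
```

Keeping an explicit "unattributed" bucket is a deliberate choice here: its size shows how much spend still has no owner.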

What AI cost ownership should include

The basic unit of AI cost ownership is a request with context. Each request should carry enough metadata to explain who made it, why it happened, which model served it, how many tokens were used, and whether the cost pattern is expected.

This does not mean logging every prompt by default. Many teams should begin with metadata, token counts, latency, status codes, model names, and workflow identifiers. Prompt and response logging should be controlled by policy.

- User, team, service, or customer attribution.

- Input, output, cached, and total token counts.

- Provider, model, latency, retries, and error data.

- Budget and rate-limit policy by workflow.

- Optional prompt logs with redaction and retention controls.
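One way to picture a "request with context" is a small record type. This is a sketch with assumed field names rather than an established schema. In particular, how cached tokens relate to input tokens varies by provider, so the record simply stores the counts as reported.

```python
from dataclasses import dataclass, asdict
from typing import Optional


@dataclass
class LLMRequestRecord:
    # Attribution: who made the request and why.
    actor: str
    team: str
    workflow: str
    # Serving details.
    provider: str
    model: str
    # Usage as reported by the provider.
    input_tokens: int
    output_tokens: int
    cached_tokens: int = 0
    # Reliability signals.
    latency_ms: float = 0.0
    status_code: int = 200
    retries: int = 0
    # Prompt text is optional and only kept when policy allows it.
    prompt_log: Optional[str] = None

    @property
    def total_tokens(self) -> int:
        return self.input_tokens + self.output_tokens


def to_log_event(record: LLMRequestRecord, log_prompts: bool = False) -> dict:
    """Build the event to store; prompt text is dropped unless enabled."""
    event = asdict(record)
    event["total_tokens"] = record.total_tokens
    if not log_prompts:
        event["prompt_log"] = None
    return event


record = LLMRequestRecord(
    actor="user-123", team="support", workflow="ticket-summary",
    provider="example-provider", model="small-model",
    input_tokens=1800, output_tokens=220, cached_tokens=600,
    latency_ms=940.0, retries=1, prompt_log="Summarize this ticket...",
)
event = to_log_event(record)  # metadata only by default
```

The default of `log_prompts=False` mirrors the metadata-first approach described above: token counts and identifiers are always recorded, while prompt text has to be switched on deliberately.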

Why shared keys hide accountability

Shared provider keys make early experiments easy, but they flatten ownership. If multiple apps and developers use the same key, the provider dashboard cannot reliably separate production usage from testing, internal tooling, support automation, or evaluation jobs.

Managed gateway access gives teams a cleaner model. The organization can keep provider credentials secure while issuing internal access with actor identity, budgets, model rules, and telemetry.
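The gateway idea can be sketched as a lookup from an internal key to an actor, a budget, and a set of allowed models. Every name below (`InternalKey`, `Gateway`, `authorize`, `record_spend`) is hypothetical and does not describe any particular product's API. A real gateway would also handle key storage, concurrency, and budget resets.

```python
from dataclasses import dataclass


@dataclass
class InternalKey:
    actor: str                 # who the key was issued to
    workflow: str              # what the key is meant for
    monthly_budget_usd: float
    allowed_models: frozenset
    spent_usd: float = 0.0


class Gateway:
    """Holds the real provider credential; callers only see internal keys."""

    def __init__(self, keys):
        self._keys = keys  # internal key id -> InternalKey

    def authorize(self, key_id, model, estimated_cost_usd):
        """Return (allowed, detail). On success, detail is the actor."""
        key = self._keys.get(key_id)
        if key is None:
            return False, "unknown key"
        if model not in key.allowed_models:
            return False, "model not allowed"
        if key.spent_usd + estimated_cost_usd > key.monthly_budget_usd:
            return False, "budget exceeded"
        return True, key.actor

    def record_spend(self, key_id, cost_usd):
        """Attribute actual cost to the key after the provider responds."""
        self._keys[key_id].spent_usd += cost_usd


gateway = Gateway({
    "ik-eval": InternalKey(
        actor="eval-pipeline", workflow="nightly-evals",
        monthly_budget_usd=50.0, allowed_models=frozenset({"small-model"}),
    ),
})

ok, actor = gateway.authorize("ik-eval", "small-model", estimated_cost_usd=2.0)
gateway.record_spend("ik-eval", 49.0)
blocked, reason = gateway.authorize("ik-eval", "small-model", estimated_cost_usd=2.0)
```

Because every call resolves to an actor before it reaches the provider, evaluation jobs, production traffic, and internal tools stop blending into one shared key.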

How OggyCloud approaches the problem

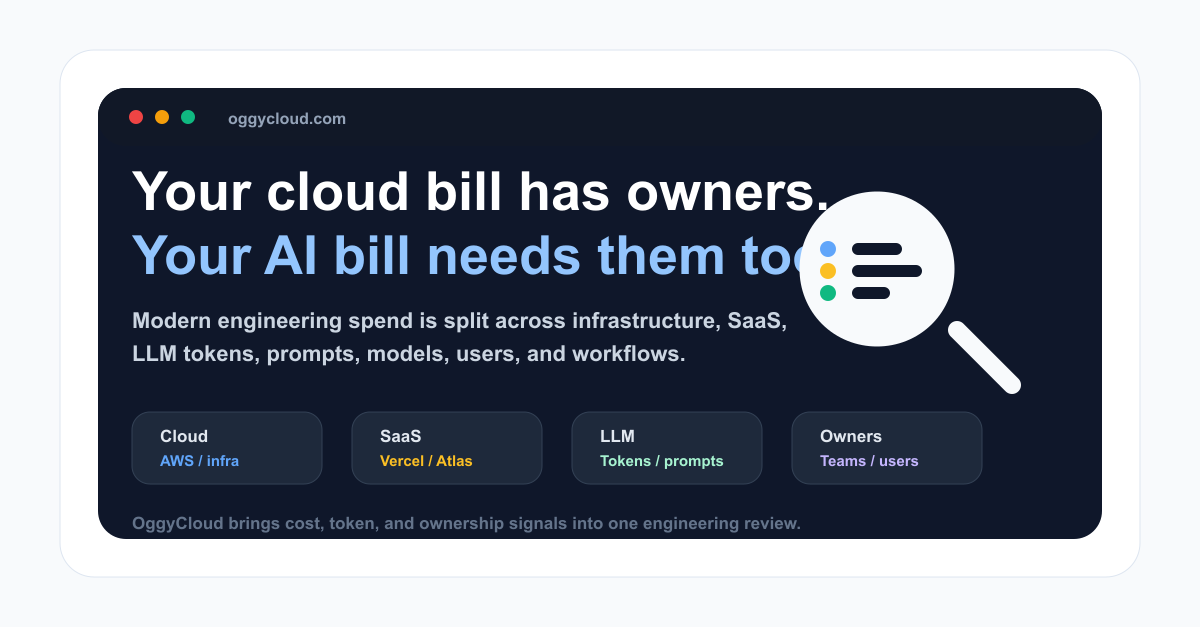

OggyCloud is built around the idea that modern engineering spend spans cloud infrastructure, SaaS platforms, and AI APIs. These costs should not live in separate operational silos when the same teams create and optimize them.

For AI usage, OggyCloud helps teams move toward managed keys, signed actor identity, token telemetry, prompt policy, and cost views by model, team, workflow, and provider. The same dashboard can also connect that AI spend with AWS resources, Vercel usage, MongoDB Atlas cost, and other platform signals.

- Route LLM traffic through a governed gateway.

- Attribute tokens and cost to owners.

- Review cloud, SaaS, and AI spend together.

- Turn cost spikes into engineering actions instead of invoice surprises.